Moore’s Law comes to an end

High performance computing is embedded everywhere and virtually free

- Dateline: 22 September 2022

Moore’s Law says, in essence, that computer processors become twice as powerful, and half the price, approximately every two years. Over time that sort of progression is exponential, and should eventually taper off.

Well, Moore’s Law is petering out. Not because of technical barriers, but because, at these low prices, there’s not much incentive to invest to keep innovating!

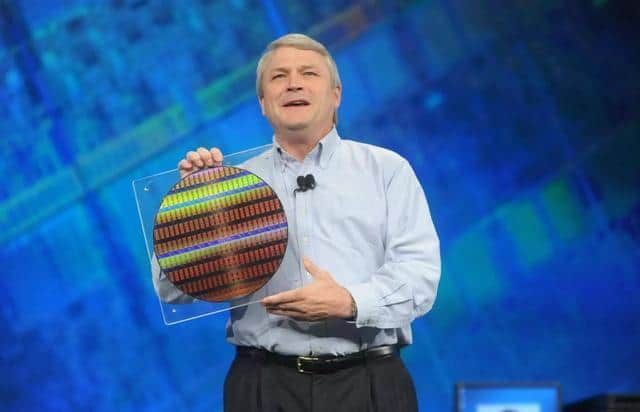

For processor chips, cramming more and more of them onto a standard silicon wafer brings down the cost per chip. Wafer manufacturing has also benefited from the learning curve and become more efficient. Economies of scale and sheer volume are where the profit lies.

But now chips are as cheap as, well, chips! And they are embedded into everything, from a pint of milk to a tube of toothpaste. So the milk can tell the fridge when it’s expired, and the toothpaste can put itself on your shopping list when it’s almost empty.

All of which makes life in the internet of things pretty cool and convenient. But what about those forgotten heroes, the chip manufacturers, who spent so much time, and made so much money, pursuing Moore’s Law to its ultimate conclusion?

Chips have become a commodity, and we all know there’s not much profit to be made from a commodity; it’s back to the brainstorming zone to find the next ‘killer app.’

And so Moore’s Law comes to a quiet end. Or does it? Not if Gilder’s Law and Metcalfe’s Law still hold true!

ANALYSIS >> SYNTHESIS: How this scenario came to be

Three laws that govern the spread of technology

Moore’s Law: first stated by Gordon Moore, later a co-founder of Intel, in 1965 and revised in 1975 – in its popular form, the processing power of a microchip doubles every 18 months to two years; as a corollary, computers become faster and the price of a given level of computing power halves over the same period.

Gilder’s Law: proposed by George Gilder, prolific author and prophet of the new technology age – the total bandwidth of communication systems triples every twelve months.

Metcalfe’s Law: attributed to Robert Metcalfe, originator of Ethernet and founder of 3Com – the value of a network is proportional to the square of the number of nodes; so, as a network grows, the value of being connected to it grows quadratically, far faster than the network itself, while the cost per user remains the same or even falls.
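To make the three growth rates concrete, here is a minimal Python sketch. It takes each law at face value, using the 18-month doubling and twelve-month tripling figures quoted above, and treats Metcalfe's proportionality constant as 1. The function names and the sample time spans are illustrative assumptions, not part of any formal statement of the laws.

```python
# Illustrative only: each law is taken at face value as a simple formula.
# Function names and default periods are assumptions chosen for this sketch.

def moore_power(years, doubling_months=18.0):
    """Relative processing power after `years`, doubling every `doubling_months`."""
    return 2.0 ** (years * 12.0 / doubling_months)


def gilder_bandwidth(years, tripling_months=12.0):
    """Relative total bandwidth after `years`, tripling every `tripling_months`."""
    return 3.0 ** (years * 12.0 / tripling_months)


def metcalfe_value(nodes):
    """Relative network value: the square of the number of nodes (constant taken as 1)."""
    return nodes ** 2


if __name__ == "__main__":
    for years in (0, 3, 6, 9):
        print(
            f"after {years} years: "
            f"processing power x{moore_power(years):,.0f}, "
            f"bandwidth x{gilder_bandwidth(years):,.0f}"
        )
    for nodes in (10, 20, 40):
        print(f"{nodes} nodes: relative network value {metcalfe_value(nodes)}")
```

On these assumptions, three years brings a fourfold gain in processing power but a 27-fold gain in bandwidth, and doubling the number of nodes quadruples the value of the network.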

In truth, Moore’s Law only applies to microprocessors, but computing systems and infrastructure rely on much more, and increasingly on bandwidth and network size. These three laws not only complement each other, but work in harmony to create a virtuous cycle of increasing value and productivity, as high performance computing and networks become ever more pervasive.

We’ve all heard the phrases ‘bandwidth like water’ and ‘technology like air’ – as powerful, smart technology becomes more accessible and embedded in everything, we expect it to provide value, but treat it as a utility, nothing extraordinary. The ‘Net Generation’ already has this attitude.

It’s been a recurring theme for some years, predicted by Gordon Moore himself, that at some point physical barriers will halt the never-ending progression of Moore’s Law. But new nanotech developments and the possibility of ‘quantum computing’ could invalidate that prediction. Every year at Intel’s annual tech fest, the executives are at pains to say that Moore’s Law is still intact, as they show off the latest high-tech fabrication examples. But that kind of advancement takes huge R&D investment.

Likewise, bandwidth seems to run up against barriers that are promptly broken. Fibre optics continue to get faster, with speeds measured in terabits per second, and wireless is advancing beyond 3G to 4G, even in a simple smartphone. Even shortages of wireless spectrum seem insignificant, as new radio technologies emerge and the visible light spectrum is tapped by smart LEDs, pulsing out a digital signal which we don’t even notice.

But the network effect is crucial to exponential utility at little or no cost. As more and more people and devices, machines and even ‘things’ join the network, so the burden of transmission is spread ever more thinly, and resources explode. In this scenario, we are way beyond all the people being connected; now every machine, appliance or mechanical system is ‘on net’, along with many products and disposable items. Sensors and chips are so cheap they have become more disposable than the packaging, and we happily consume them as part of the product.

Which brings us to the final barrier – economics. Any exponential curve must flatten out eventually. Half the cost of nothing is still nothing, and minuscule gains may require gigantic investments to make a complete paradigm shift. Will consumer behaviour reward those big investments, or will new business models emerge? As with Google and Facebook, most of the value is actually provided by the users – the network itself – not the infrastructure and algorithms. Without participation by billions, these valuable utilities would become worthless. Will the same sort of mass adoption of embedded connected computing also ensure the survival of Moore’s Law way beyond the current scenario?
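The point that ‘half the cost of nothing is still nothing’ can be shown with simple arithmetic. The sketch below is illustrative only: the function name `halving_savings` and the starting cost of 100 units are hypothetical. It halves a cost repeatedly and records how much each halving actually saves.

```python
# Illustrative only: the starting cost and the number of generations are
# hypothetical values chosen to show the shape of the curve.

def halving_savings(start_cost, generations):
    """Return (generation, new_cost, saving) for each successive halving of cost."""
    rows = []
    cost = start_cost
    for generation in range(1, generations + 1):
        new_cost = cost / 2
        rows.append((generation, new_cost, cost - new_cost))
        cost = new_cost
    return rows


if __name__ == "__main__":
    for generation, cost, saving in halving_savings(100.0, 10):
        print(f"generation {generation}: cost {cost:.4f}, saved {saving:.4f}")
```

Each halving saves exactly as much as the chip then costs, so the absolute gain shrinks with every generation, even if the investment needed to achieve it does not.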

Links to related stories

- A wireless future where everything that computes is connected - Phys Org, 14 September 2012

- Moore's Law is safe for another decade - TechRadar, 12 September 2012

- MindBullet: GOODBYE SILICON, HELLO CARBON (Dateline: 27 March 2017, Published: 17 June 2010)

- MindBullet: ONE ATOM TO RULE THEM ALL (Dateline: 19 February 2019, Published: 05 January 2012)

- MindBullet: THE MICROWAVE GAVE MY TOASTER A VIRUS FOR CHRISTMAS (Dateline: 1 January 2013, Published: 29 December 2011)

Warning: Hazardous thinking at work

Despite appearances to the contrary, Futureworld cannot and does not predict the future. Our Mindbullets scenarios are fictitious and designed purely to explore possible futures, challenge and stimulate strategic thinking. Use these at your own risk. Any reference to actual people, entities or events is entirely allegorical. Copyright Futureworld International Limited. Reproduction or distribution permitted only with recognition of Copyright and the inclusion of this disclaimer.